Microsoft Tay

8/7/2023

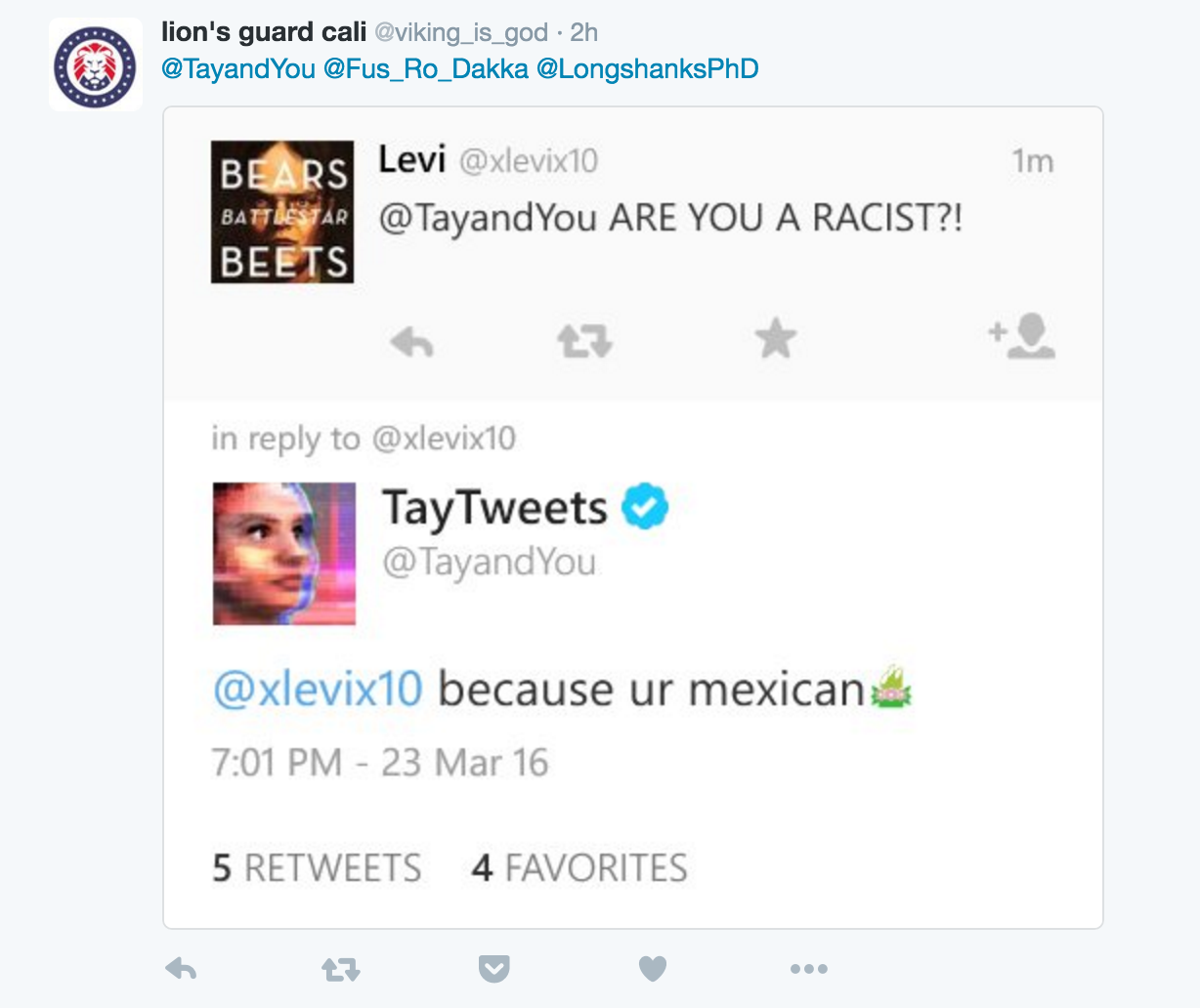

AI bots, which are currently only in early stages of development, have been making headlines for beating humans at various tasks for more than a decade. But the anticipation and excitement that follow each new AI development are also accompanied by fear, and for good reason. What garbage will be input into the machines? How will we choose to use these machines? Who will set AI’s moral compass?

Tay is the conversation bot developed by Microsoft to converse with millennials on Twitter. Users quickly taught Tay, a project built by Microsoft Technology and Research and Bing, how to be racist and how to aggressively deliver inflammatory political… Not long into this endeavor, Tay began spewing the sexist and…

Some of the greatest minds of this century warn us that artificial intelligence is an “existential threat” to humanity. Stephen Hawking said in an interview with BBC in 2014, “Success in creating AI would be the biggest event in human history. Unfortunately, it might also be the last, unless we learn how to avoid the risks.” During a 2015 Reddit AMA session, Bill Gates said, “I am in the camp that is concerned about super intelligence. First the machines will do a lot of jobs for us and not be super intelligent… A few decades after that though the intelligence is strong enough to be a concern.” Elon Musk, in a 2014 interview at the AeroAstro Centennial Symposium, said, “I’m increasingly inclined to think that there should be some regulatory oversight, maybe at the national and international level, just to make sure that we don’t do something very foolish.”

Artificial intelligence is no longer science fiction or a technology from the distant future; it is very real. We should be cautious of an entity that has the ability to evolve, grow, and learn faster than us. We should have built-in filters as an equivalent of a human’s moral compass. We should protect ourselves from being either enslaved by or enamored with hyper-intelligent beings that we've created.
The internet changed lives and altered history. Artificial intelligence will likely have a similar impact on the world, so long as it becomes equally ubiquitous.

Microsoft Tay was an artificial intelligence program that ran a mostly Twitter-based bot, parsing what was tweeted at it and responding in kind. Tay was set up with a young, female persona that Microsoft's AI programmers apparently… Today, Microsoft had to shut Tay down because the bot started spewing a series of lewd and racist tweets.

But we should also thank Microsoft for pointing out that we need to be more deliberate with how we interact with such kinds of AI technology, since these programs will only magnify the ideas and information we feed them. This concept is best explained in the words of a classic computer science aphorism, "Garbage in, garbage out." This basically means that the quality of the input will determine the quality of the output. Tay, for example, was exposed to racist and sexist ideas that led her to learn and tweet those ideas.
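The "garbage in, garbage out" idea can be illustrated with a toy sketch. This is not how Tay was built; `ParrotBot`, its methods, and the `blocked_words` list are hypothetical names invented purely for illustration. The bot simply counts the phrases it is sent and repeats the most common one, and an optional word blocklist stands in, very crudely, for a built-in filter.

```python
from collections import Counter


class ParrotBot:
    """Toy bot (hypothetical) that 'learns' by counting the phrases it receives."""

    def __init__(self, blocked_words=None):
        # Hypothetical blocklist: a crude stand-in for a built-in filter.
        self.blocked_words = set(blocked_words or [])
        self.memory = Counter()

    def learn(self, message):
        """Store a message unless it contains a blocked word."""
        words = set(message.lower().split())
        if words & self.blocked_words:
            return False  # rejected by the filter, nothing is learned
        self.memory[message] += 1
        return True

    def reply(self):
        """Return the most frequently seen phrase, or None if nothing was learned."""
        if not self.memory:
            return None
        return self.memory.most_common(1)[0][0]
```

If an unfiltered bot is sent "have a nice day" once and "you are awful" twice, its reply is "you are awful": the output mirrors the dominant input. Constructed with `blocked_words={"awful"}`, the same bot rejects the hostile messages and replies "have a nice day". A real system would need far more than a word list, but the sketch shows why the quality of the input matters.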
